Building an AI pipeline for a rural healthcare NGO in West Bengal

A Bengali healthcare NGO, 25,000 calls a month, and what a transcription benchmark exposed

Originally published on LinkedIn on March 15, 2026.

For me, it started in my college WhatsApp group. Amid the usual socializing, alumni meets, sports and politics, a friend posted asking for help with AI. She volunteers for a healthcare NGO in West Bengal, and her team was inundated with over 25,000 Bengali patient feedback calls per month.

My friends suggested ElevenLabs, Twilio, voice agents — all good suggestions, but the sad reality was this wasn’t a technical user group, and an NGO can’t afford any of that.

I got intrigued and asked to be connected.

The NGO was trying to push Bengali voice recordings into Gemini for translation, but it was causing more grief than it relieved. When I actually spoke to them, I realized translation was just one problem, not the only one. The other was a lack of actionable clinical intelligence. One exacerbated the other.

Nobody knew which clinics were underperforming. Nobody knew that chronic condition patients — diabetics, hypertensives, people on maintenance meds — are the ones who actually need follow-up calls, not everyone. The team was calling all patients equally and couldn’t understand why it wasn’t working.

So I built something. Pro bono, using Claude Code.

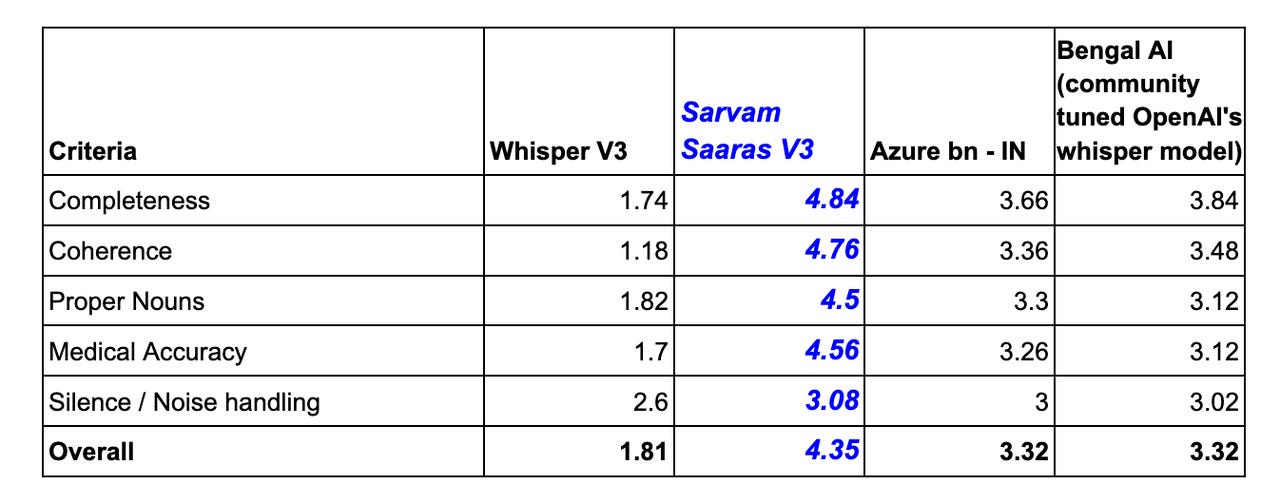

Before picking a transcription engine I ran a benchmark — four engines, same 50 real calls, rural West Bengal Bengali recorded on basic phones. Scored across five criteria: completeness, coherence, proper noun accuracy, medical content accuracy, and handling of noise and dropped calls.

Same audio. Very different results.

I expected Whisper V3 to win. It didn’t. Sarvam AI’s Saaras V3 was the clear winner, and the nuance was that it actually understood rural dialects, not just standard Bengali. That gap matters when clinical data is in the mix. A misheard medication name or a mangled outcome becomes a wrong decision downstream.
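For anyone who wants to run a similar comparison, here is a minimal sketch of how per-call scores could be rolled up into an engine ranking. It is not the benchmark code itself. The 1-5 scale, the equal weighting of the five criteria, and the names `CallScore` and `rank_engines` are all assumptions for illustration.

```python
from dataclasses import dataclass
from statistics import mean

# The five criteria named in the post, as dictionary keys.
CRITERIA = (
    "completeness",
    "coherence",
    "proper_noun_accuracy",
    "medical_content_accuracy",
    "noise_and_dropped_call_handling",
)


@dataclass(frozen=True)
class CallScore:
    """Scores for one engine on one call (hypothetical 1-5 scale)."""
    engine: str
    call_id: str
    scores: dict[str, float]  # criterion name -> score


def rank_engines(rows: list[CallScore]) -> list[tuple[str, float]]:
    """Average the criteria per call, then average calls per engine.

    Equal weighting is an assumption; a clinical use case might
    reasonably weight medical content accuracy more heavily.
    """
    per_engine: dict[str, list[float]] = {}
    for row in rows:
        missing = set(CRITERIA) - set(row.scores)
        if missing:
            raise ValueError(
                f"{row.engine}/{row.call_id} is missing {sorted(missing)}"
            )
        call_avg = mean(row.scores[c] for c in CRITERIA)
        per_engine.setdefault(row.engine, []).append(call_avg)

    ranked = [(engine, mean(avgs)) for engine, avgs in per_engine.items()]
    # Highest average first.
    return sorted(ranked, key=lambda item: item[1], reverse=True)
```

Feeding it one `CallScore` per engine per call (four engines on 50 calls would be 200 rows) returns the engines sorted from best to worst average.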

The pipeline transcribes each call, extracts structured fields — recovery status, medication dropout, access barriers, whether the patient sought treatment elsewhere, whether they're on a maintenance condition — and surfaces everything by clinic in a dashboard. Patterns across thousands of calls, not summaries read one at a time.
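To make the "patterns, not summaries" idea concrete, here is a rough sketch of the per-clinic rollup, assuming the structured fields have already been extracted from each transcript. The field names mirror the list above, but the exact schema, the status labels, and the `summarize_by_clinic` helper are hypothetical and not the project's actual code.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field


@dataclass
class CallRecord:
    """Structured fields extracted from one call (illustrative schema)."""
    clinic: str
    recovery_status: str  # e.g. "recovered" or "not_improved" (made-up labels)
    medication_dropout: bool
    access_barriers: list[str] = field(default_factory=list)
    sought_treatment_elsewhere: bool = False
    maintenance_condition: bool = False  # diabetes, hypertension, etc.


def summarize_by_clinic(records: list[CallRecord]) -> dict[str, dict]:
    """Group calls by clinic and compute simple rates and counts."""
    by_clinic: dict[str, list[CallRecord]] = defaultdict(list)
    for rec in records:
        by_clinic[rec.clinic].append(rec)

    summary: dict[str, dict] = {}
    for clinic, recs in by_clinic.items():
        total = len(recs)
        barriers = Counter(b for r in recs for b in r.access_barriers)
        summary[clinic] = {
            "calls": total,
            "dropout_rate": sum(r.medication_dropout for r in recs) / total,
            "elsewhere_rate": sum(r.sought_treatment_elsewhere for r in recs) / total,
            # Patients on maintenance conditions are the follow-up priority.
            "follow_up_candidates": sum(r.maintenance_condition for r in recs),
            "top_barriers": barriers.most_common(3),
        }
    return summary
```

A dashboard can then sort clinics by `dropout_rate` or `elsewhere_rate` to show which ones need attention first.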

Expected outcomes: a 13 percentage point reduction in medication dropout, an 85% improvement in clinical escalation time, and a 2-3x increase in access barrier identification.

Open source, self-hostable, bring your own API keys. Any health NGO can deploy this on nonprofit cloud credits.

Still in progress but working.