The Four Levels of Knowing If Your AI Product Actually Worked

The Smile Signal

Originally published on LinkedIn on March 28, 2026.

There’s a conversation happening in AI about how to measure whether an AI agent actually helped someone. New frameworks, scoring systems, startups — Microsoft just introduced a Multimodal Agent Score (Dynamics 365 Blog, Feb 2026) to holistically evaluate AI agents across understanding, reasoning, and response quality.

It’s a good conversation. But I think it’s a much older question wearing a new outfit.

Every product team has wrestled with the same thing: did what we built actually work for the person using it? And the answer has always come in layers.

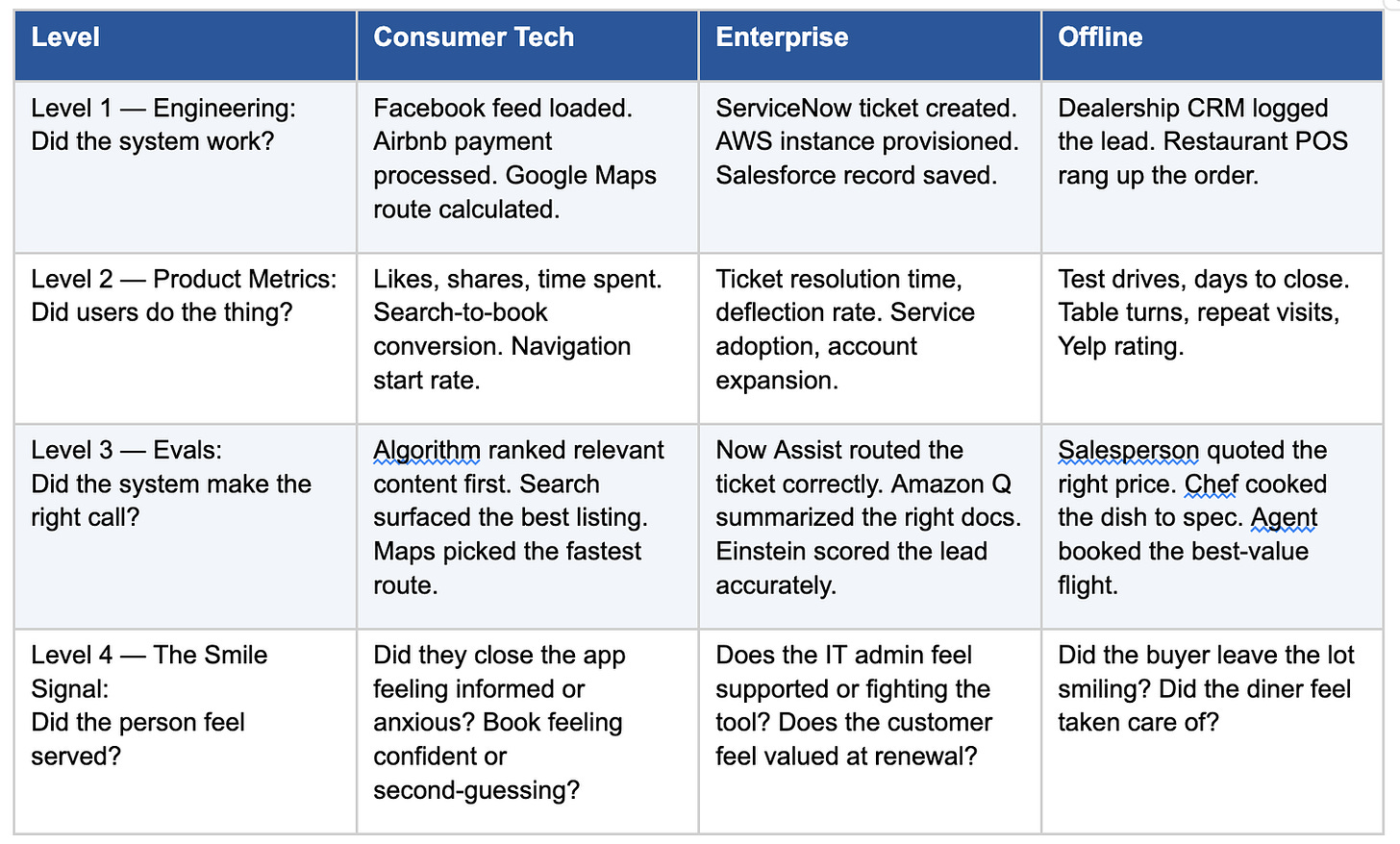

The Measurement Stack

Level 1 is solved. Level 2 is where most teams live. Level 3 is where the eval industry is printing money — LangSmith, Braintrust, Arize. But all of that still tells you if the agent did the right thing. Not whether the person felt served.

Why AI agents break the old model

In traditional products, Level 2 was a good enough proxy for Level 4. Clicks, conversions, repeat visits — reasonable signals because the interaction was structured. A funnel. Buttons. Predictable paths.

AI agents blow that up. Conversations are unstructured, variable, and don’t map to funnels. A 15-turn chat that looks successful might have left someone confused. A 2-turn conversation that looks like abandonment might have given them exactly what they needed. There’s no “like” button in a chat.

McKinsey’s “Trust in the Age of Agents” research (2025) found that 80% of organizations have already encountered risky behavior from AI agents. Their framing: “Agency isn’t a feature; it’s a transfer of decision rights.” When you transfer decision rights, you need to know if that’s working for the person — not just the system.

Not every product needs Level 4

Facebook’s newsfeed? Level 2 is enough. Low-stakes, high-volume. Trillion-dollar business on likes and time-spent. A resolution KPI for whether each post made you happy would be overkill.

And there’s academic evidence for why this is fine. Keiningham et al. published a study in the Journal of Marketing (2007) showing that changes in Net Promoter Score have almost no relationship with how customers allocate spending. A broader review by Dawes in the International Journal of Market Research (2024) confirmed: NPS is not a reliably superior predictor of growth compared with other satisfaction metrics. Behavioral proxies often tell you more than asking people how they feel.

For high-volume, low-stakes products — Level 2 isn’t a consolation prize. It’s the right answer.

Where Level 4 is genuinely needed

It comes down to stakes and trust. Harvard Business Review Analytic Services surveyed 603 business leaders (published Dec 2025 via Fortune) and found only 6% of companies fully trust AI agents to handle core business processes, while 43% limit them to routine tasks.

Financial advisory. An AI copilot drafts a client proposal. The advisor accepts it. But did they send it as-is, or silently rewrite it? A 2025 World Economic Forum / Capgemini report found 93% of financial advisors want final say over AI outputs. The question isn’t “was the proposal accurate” — it’s “did the tool build or erode trust?”

Healthcare. An AI suggests a treatment path. The doctor follows it. But were they confident, or busy and planning to second-guess later? A 2024 study in Frontiers in Digital Health found that even when clinical AI is accurate, adoption stalls without perceived trustworthiness. Accuracy alone doesn’t drive adoption. Confidence does.

Enterprise renewals. The contract went through, terms accurate, stakeholders notified. But does the customer feel like a partner or a line item? That feeling — not the contract accuracy — determines next year’s renewal.

Legal. An AI flags contract risks. The lawyer reads the output. Do they trust it enough to stop there? If they’re redoing the review, the AI didn’t resolve anything. It added a step.

The ice cream shop. No dashboard. No evals. The owner watches the kid’s face. Did the kid smile? Are they tugging their parent’s arm saying “can we come back tomorrow?” That’s the purest Smile Signal — unmeasurable, and yet the most powerful retention signal there is.

How would you actually measure Level 4?

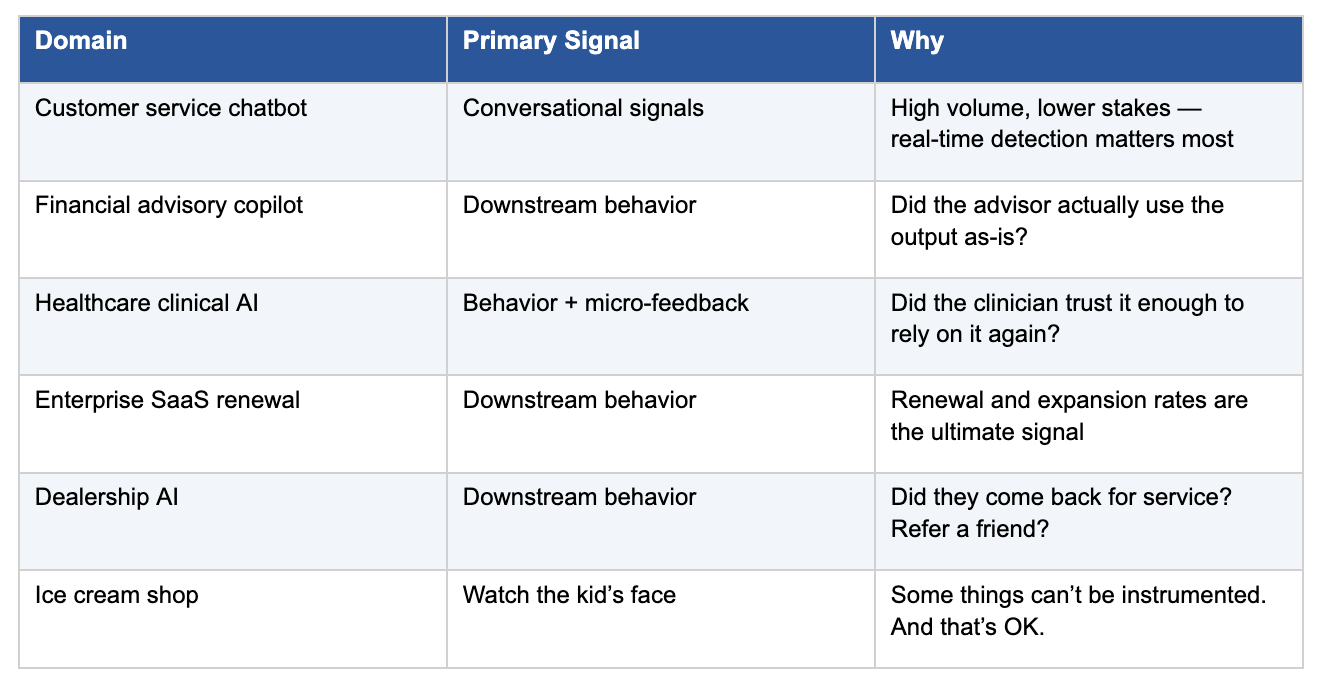

Nobody has cracked this. But I think it’s a composite — three signals, weighted by domain:

Conversational signals (real-time, weakest). Corrections, rephrasing, sentiment shifts. Useful but limited. The biggest blind spot: silent failure — the person who gets a bad answer and just quietly leaves.

Downstream behavior (lagging, strongest). Did the user come back? Did the advisor send the proposal without rewriting? Did the doctor follow the suggestion on the next patient too? Closest to ground truth, but requires instrumenting beyond the conversation.

Contextual micro-feedback (intermittent, calibrating). Not NPS. Matt Dixon, Nick Toman, and Karen Freeman made the case in “Stop Trying to Delight Your Customers” (HBR, July 2010), later expanded in The Effortless Experience: delight has negligible impact on loyalty; reducing effort matters far more. Gartner’s CES research puts a number on it: 96% of customers who have a high-effort experience become more disloyal. Low-friction, in-the-moment feedback (a thumbs up, a “this wasn’t helpful” button) is better than any post-interaction survey.
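To make the composite idea concrete, here is a minimal Python sketch of how the three signals might be blended. Everything in it is an assumption for illustration: the names `DOMAIN_WEIGHTS`, `kept_as_is_ratio`, and `composite_score` are hypothetical, the weights are made-up numbers, and text similarity between the AI draft and the sent version is only one possible proxy for "did the advisor send the proposal without rewriting."

```python
from difflib import SequenceMatcher
from typing import Dict, Optional

# Hypothetical per-domain weights for the three signals.
# Illustrative numbers only; none of them come from a study or from this article.
DOMAIN_WEIGHTS: Dict[str, Dict[str, float]] = {
    "financial_advisory": {"conversational": 0.2, "downstream": 0.6, "micro_feedback": 0.2},
    "consumer_chat": {"conversational": 0.4, "downstream": 0.3, "micro_feedback": 0.3},
}


def kept_as_is_ratio(ai_draft: str, sent_version: str) -> float:
    """Downstream proxy: how much of the AI draft survived into what was sent.

    Returns a similarity in [0, 1]; 1.0 means the draft went out unchanged.
    """
    return SequenceMatcher(None, ai_draft, sent_version).ratio()


def composite_score(
    signals: Dict[str, Optional[float]], weights: Dict[str, float]
) -> Optional[float]:
    """Weighted average of whichever signals were observed.

    Each signal is expected in [0, 1]. A value of None means "not observed"
    (for example, no feedback button was pressed); it is skipped and the
    remaining weights are renormalized. Returns None if nothing was observed.
    """
    observed = {name: value for name, value in signals.items() if value is not None}
    total_weight = sum(weights[name] for name in observed)
    if total_weight == 0:
        return None
    return sum(weights[name] * value for name, value in observed.items()) / total_weight


if __name__ == "__main__":
    # Made-up example texts, not real data.
    draft = "We recommend a 60/40 allocation with quarterly rebalancing."
    sent = "We recommend a 60/40 allocation with annual rebalancing."
    signals = {
        "conversational": 0.5,
        "downstream": kept_as_is_ratio(draft, sent),
        "micro_feedback": None,  # no thumbs up or down was given
    }
    print(composite_score(signals, DOMAIN_WEIGHTS["financial_advisory"]))
```

One design choice to flag: skipping a missing signal treats silence as neutral. Given the silent-failure blind spot described above, a real system might want to treat a missing signal as weak negative evidence instead of ignoring it.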

My take

For low-stakes, high-volume products — don’t over-engineer this. Level 2 works. It’s always worked.

For high-stakes, trust-dependent domains — finance, healthcare, legal, enterprise — Level 4 isn’t optional. It’s where competitive advantage lives.

The companies that figure out how to blend these signals — and know which ones to weight for their domain — will build the Smile Signal of agentic products. Except hopefully one that actually correlates with what it claims to measure.

Sources

Dixon, Freeman, Toman — “Stop Trying to Delight Your Customers,” Harvard Business Review, July 2010

Keiningham, Cooil, Andreassen, Aksoy — “A Longitudinal Examination of Net Promoter and Firm Revenue Growth,” Journal of Marketing, 2007

Dawes — “The Net Promoter Score: What Should Managers Know?” International Journal of Market Research, 2024

McKinsey — “Trust in the Age of Agents,” 2025

Harvard Business Review Analytic Services — AI Agent Trust Survey, Dec 2025 (via Fortune)

World Economic Forum / Capgemini — AI in Wealth Management, 2025

Frontiers in Digital Health — Trust in Clinical AI Systems, 2024

Gartner — Customer Effort Score Research; Agentic AI Predictions, 2025

Microsoft Dynamics 365 Blog — “Multimodal Agent Score,” February 2026

Where have you seen the gap between “the system worked” and “the person felt served”?